Most IT environments don’t become difficult to operate because of a single bad decision. They become difficult because of accumulation – and accumulation is almost always invisible until it isn’t.

A new security tool gets deployed. A compliance framework adds reporting requirements. A vendor platform introduces another administrative console. Each addition is defensible. Security improves. Visibility improves. The business gains something it didn’t have before. But very little gets retired. The legacy system that was supposed to come offline stays online a little longer. The reporting process that existed before the new dashboard remains because someone still depends on it. Instead of being replaced, the environment grows in layers, and new obligations accumulate on top of old ones without any corresponding reduction in the underlying workload.

For the people operating these environments, the effect is subtle at first. Ticket volumes may not change. Infrastructure may even become more stable. But the amount of context required to keep everything functioning starts to grow. More systems interact. More dependencies appear. More small decisions are required about timing, risk, and tradeoffs. Eventually the work stops feeling like a series of tasks and starts feeling like constant coordination. That shift – from execution to continuous situational management – is where most of what gets described as burnout actually originates.

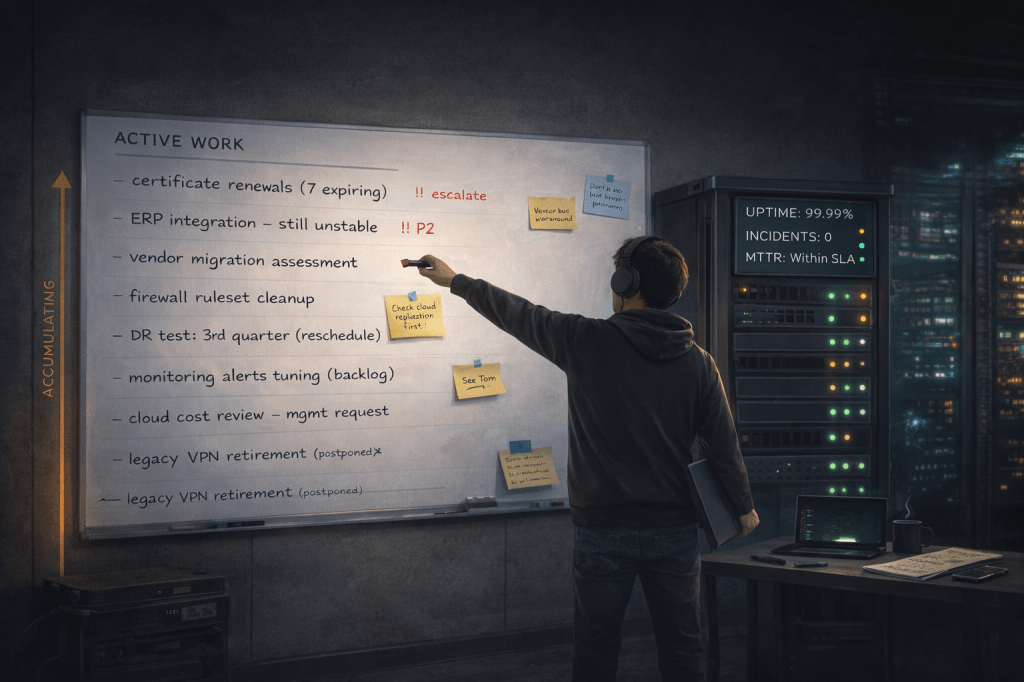

The Work That Doesn’t Show Up

There is a particular dynamic that accelerates this process and makes it difficult to see from outside the environment. Capable operators absorb instability. A script gets adjusted when something drifts. A configuration gets corrected before it causes an incident. A vendor quirk gets worked around. An integration that occasionally fails gets nudged back into place. None of these actions are dramatic, and most of them are invisible to the rest of the organization. The system continues operating. Users continue working. Leadership sees stability.

But stability, in these environments, is not a passive condition. It is an active output produced by ongoing judgment. And the effort required to produce it keeps increasing even when the visible signals stay calm.

This creates a structural distortion. When systems are running smoothly and incidents are rare, organizations reasonably interpret that stability as available capacity. New initiatives get added. Additional responsibilities appear. Another platform enters the environment. From the outside, the system still looks stable. From the inside, the effort required to maintain that stability has grown considerably.

Standard operational metrics compound the problem. Uptime percentages, ticket queue velocity, mean time to resolution – these metrics capture outcomes and the response to failure: how often something broke and how quickly it was restored. What they rarely measure is the judgment and situational awareness that prevented failures from reaching that threshold in the first place. When a dependency is caught before a change window begins, when a risky update is delayed until a safer rollback path exists, when an automation is paused before it collides with another process – none of that appears on a dashboard. The decision disappears into the background. The system holds, and nothing is recorded.

Over time, this visibility gap produces a distorted picture of operational health. Calm metrics look like available capacity. Organizations build on that assumption. And the gap between what the environment actually requires and what leadership believes it requires continues to widen.

What Accumulates Beyond the Ticket Queue

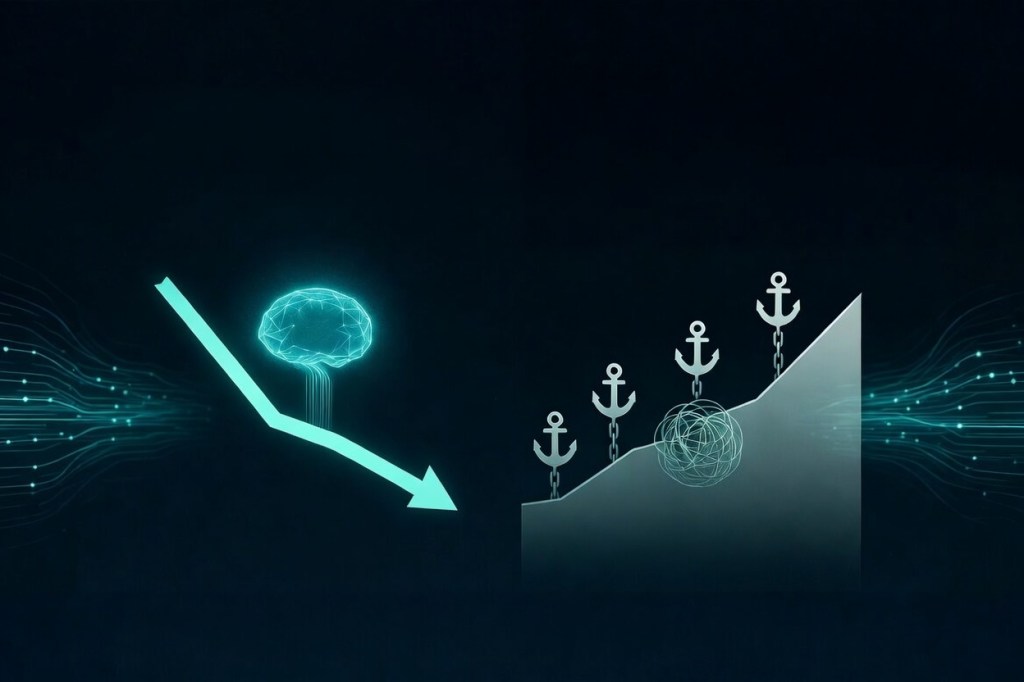

The most underexamined cost in complex IT environments is context burden – the growing mental map required to safely operate layered systems. Which system depends on which. Which change might ripple into another platform. Which workaround is still protecting a fragile integration. Which patch window must be sequenced around which upstream dependency.

This knowledge is rarely documented at the level of granularity that operations actually require. It lives in the awareness of the people doing the work. And as environments grow more layered, that mental map becomes larger and more demanding to maintain. Routine changes start requiring longer discussions than they once did. Teams become cautious about certain systems. Temporary fixes remain in place well past their intended lifespan. These are not signs of dysfunction or hesitation. They are signs that the environment has become cognitively heavy to operate.

The practical test is straightforward: if someone joined the team tomorrow, how long would it take them to safely operate the environment? Not to operate it well – just safely. When the honest answer is measured in months, and when that answer is primarily about absorbing undocumented context rather than learning documented systems, the environment is carrying a concentration of operational knowledge that belongs in structure, not in people.

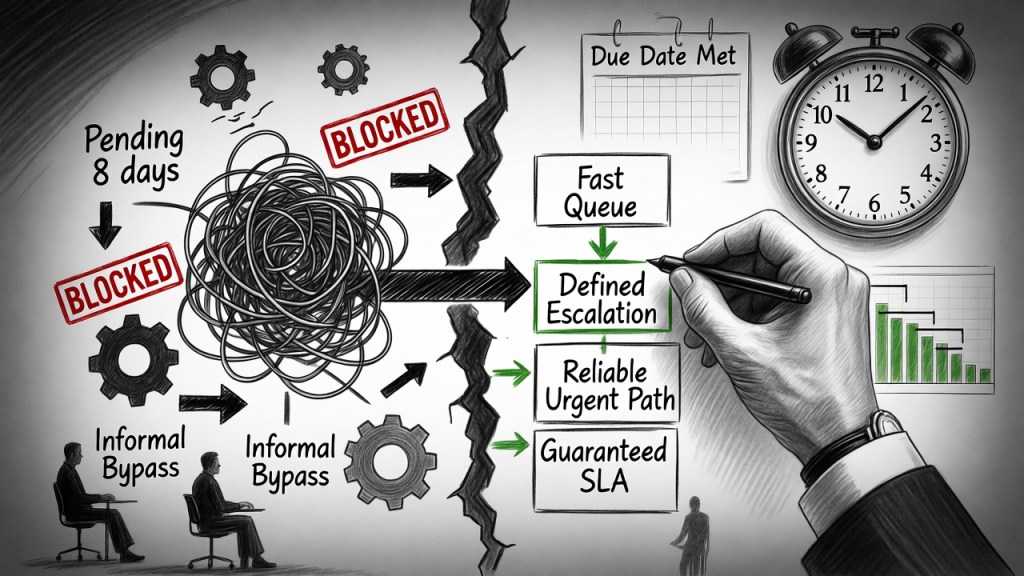

How Normal Expands

Underneath all of this runs a quieter problem. Stable systems create confidence, and confidence shapes expectations. When incidents remain rare and service metrics stay within expected ranges, it becomes natural to build on what appears to be working. A new platform is introduced to support a business initiative. Another integration is added. A reporting requirement expands because better data is now available. Each of these decisions is reasonable in isolation.

But embedded in many of them is an assumption that rarely gets examined: if the system is stable today, it must have room to absorb more tomorrow.

Over time, that assumption shifts what the organization considers normal. Responsibilities that once required careful coordination become routine. Systems that once needed deliberate change management become expected to accommodate faster updates. The definition of what the environment should handle expands gradually, through dozens of additions that were never evaluated together. Expectations drift faster than capacity expands, and the environment doesn’t fail immediately. It simply becomes heavier to operate.

This is distinct from growth. Growth brings new capability along with a roughly proportionate increase in the resources and structure required to support it. Expectation drift places new demands on capacity that was already fully utilized, and it does so quietly, across enough decisions and enough time that no single moment looks like the cause.

What Leadership Actually Requires

Recognizing these patterns is not an argument against complexity or growth. IT environments will evolve. Systems will layer. Organizations will make demands that weren’t anticipated when the infrastructure was designed. The question is not whether that happens. The question is whether the relationship between capacity and expectation stays visible to the people responsible for managing it.

That visibility doesn’t come from dashboards alone. It comes from paying attention to a different category of signal. When the same expertise is required repeatedly to stabilize different incidents, that’s a signal. When routine changes require deeper discussion than they once did, that’s a signal. When teams avoid certain parts of the environment because the risk feels unpredictable, that’s a signal. None of these are dramatic. Together, they indicate that the system may still be stable while the effort required to keep it stable is increasing.

The leadership questions that follow from this awareness are structural rather than operational. Which constraints should be retired rather than managed? Which systems should be simplified instead of layered upon? Which expectations should be adjusted to reflect the capacity that actually exists, rather than the capacity the environment appeared to have during a period of high performance? These questions rarely produce immediate answers. But they change the trajectory of the environment over time.

Effort scales poorly. Structure scales better. For extended periods, teams can compensate for structural strain through skill, dedication, and reliability. That compensation is genuinely valuable. But when stability depends entirely on those qualities, rather than on the architecture of the environment itself, the system is more fragile than it appears. The metrics will stay calm right up until they don’t.

Most operational strain in IT does not arrive suddenly. It accumulates through reasonable decisions, capable people absorbing friction they shouldn’t have to carry indefinitely, and an organizational tendency to interpret stability as headroom. Watching the relationship between capacity and expectation – not just the incidents it eventually produces – is where technical leadership starts to separate from technical execution.

Version 1.1 – Revised March 2026