There is a category of operational risk that most IT environments carry without formally acknowledging it. It does not appear in incident reports because it has not caused an incident yet. It does not appear in capacity planning because the cost it extracts is not categorized as a cost. It shows up instead as hesitation, as manual intervention, as the institutional knowledge concentrated in one person that everyone quietly hopes does not leave. Most practitioners recognize it immediately. Most organizations do not have a clean way to discuss it.

Technical debt is the standard term, and it is accurate enough for internal use. What it tends to obscure is the nature of the obligation. Debt implies a clean ledger, a known balance, a defined repayment structure. What actually accumulates in most environments is less organized than that. It is a series of decisions that were rational at the time and never revisited, layered with non-decisions that built up the way pressure does (gradually, then all at once when conditions shift).

The workaround that became permanent infrastructure. The integration that nobody patched because patching it breaks something else. The process held together by one person’s memory of an exception that happened three years ago and was never documented. These are not failures of intelligence or diligence. They are what happens when organizations consistently reward execution over accountability: when closing the ticket matters more than closing the loop.

What the Signals Are Actually Telling You

The signals that indicate accumulated debt are rarely dramatic. A system nobody wants to touch is one. Not because it is broken, but because it works, and everyone has absorbed the intuition that touching it carries risk they cannot fully quantify. That intuition is itself a signal. When the tacit knowledge required to operate a system safely exceeds what is written down about it, the organization has a structural problem, regardless of whether that problem has surfaced as an incident.

Single-person dependencies are a related pattern and probably the most dangerous form debt takes. When the answer to “how does this work?” is a specific person’s name rather than a process or a document, the organization has allowed continuity risk to concentrate in individual memory. This is not about blaming that person. They did not create the dependency alone. What created it is an environment where building durable understanding was never prioritized over immediate output. The person who knows how the system works is doing their job. The organization failed to build the conditions that would distribute that knowledge over time.
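One way to make this pattern visible is to write down who can actually operate each system and flag the ones with a single name. The sketch below is a minimal illustration, not a prescribed method: the function `single_person_dependencies`, the `knowledge_map` structure, and every system and person name in it are hypothetical.

```python
def single_person_dependencies(operators_by_system):
    """Return {system: person} for systems that only one person can operate.

    operators_by_system is an assumed structure: system name -> list of
    people with working operational knowledge of that system.
    """
    return {
        system: next(iter(people))
        for system, people in operators_by_system.items()
        if len(set(people)) == 1
    }


# Hypothetical example data, for illustration only.
knowledge_map = {
    "billing integration": ["dana"],
    "backup scheduler": ["dana", "lee"],
    "legacy report server": ["sam"],
}

at_risk = single_person_dependencies(knowledge_map)
for system, person in at_risk.items():
    print(f"{system}: only {person} can operate this")
```

The value of the exercise is less the script than the map itself. Building it forces the question of whether "can operate" means "has done it once" or "could recover it alone on a bad day".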

The carrying cost is the signal that connects all of the others. When a team spends meaningful hours each week manually compensating for processes that should run without intervention (restarting services, validating outputs, handling exceptions that should be handled by the system), those hours are the debt payment. They do not appear on a budget line. They appear as capacity that does not exist for anything else. Capacity planning conversations that ignore this are not capacity planning. They are projections built on an incomplete picture of what the team is actually doing.
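The arithmetic behind a carrying cost is simple enough to sketch. The example below assumes the team can estimate weekly hours per manual task. The task names, the hour figures, the team size, and the 40-hour week are all illustrative assumptions rather than data from any real environment.

```python
from dataclasses import dataclass


@dataclass
class ManualTask:
    name: str
    hours_per_week: float  # hypothetical estimate gathered from the team


def carrying_cost(tasks, team_size, hours_per_person=40.0):
    """Return (total manual hours per week, share of weekly team capacity)."""
    capacity = team_size * hours_per_person
    if capacity <= 0:
        raise ValueError("team capacity must be positive")
    total = sum(task.hours_per_week for task in tasks)
    return total, total / capacity


# Illustrative figures only.
tasks = [
    ManualTask("restart stuck services", 3.0),
    ManualTask("validate nightly outputs", 5.0),
    ManualTask("handle unprocessed exceptions", 4.0),
]

hours, share = carrying_cost(tasks, team_size=4)
print(f"{hours:.1f} h/week, {share:.1%} of team capacity")
# prints "12.0 h/week, 7.5% of team capacity"
```

Even a rough number like this turns the debt payment into something a capacity planning conversation can account for.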

The Translation Problem

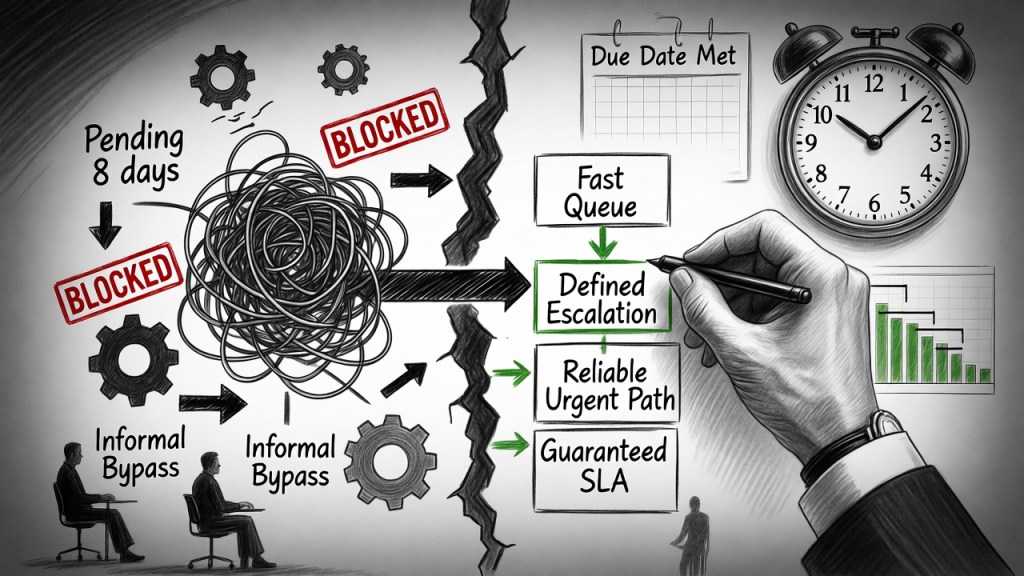

Most IT professionals who have tried to raise these issues with leadership have encountered a version of the same outcome. The conversation ends with acknowledgment and inaction. The temptation is to interpret this as indifference. Often it is not. It is a translation failure.

“Technical debt” lands in a budget meeting as a complaint about old technology, or a request for upgrades that can be deferred. It does not carry the weight of risk that the practitioner intends when they use the term. The frame that tends to be more productive is the frame of business exposure. Not because leadership needs to be managed, but because business exposure is the accurate frame. An undocumented integration that one person knows how to restart is not a technical inconvenience. It is a continuity risk with a defined blast radius. A critical system that has not been patched because patching it breaks something else is not a maintenance backlog item. It is a recoverable failure with an unknown trigger timeline.

The specifics of translation matter less than understanding what is actually being communicated when the technical framing fails. It is not that leadership cannot understand the problem. It is that they cannot evaluate risk they cannot picture. When the conversation shifts from describing technical complexity to describing operational exposure (what becomes more likely, what recovery looks like, what the team is currently spending to prevent the failure from surfacing), the response tends to change. Not always toward action, but at least toward comprehension.

What weakens these conversations structurally is comprehensiveness. A full inventory of everything aging, fragile, or undocumented gives leadership a laundry list. A laundry list produces prioritization paralysis. The more productive structure is narrower: two or three items that represent meaningful exposure, described in terms of likelihood, recovery time, and carrying cost. The goal is not to educate leadership about the technical environment. The goal is to give them enough clarity about specific risk to make a decision about it.
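Narrowing a full inventory to two or three items can be sketched as a simple ranking. The scoring formula below (estimated annual likelihood times recovery hours, plus a year of weekly carrying cost) is an assumption chosen for illustration, not an established risk model, and every item and figure in it is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ExposureItem:
    name: str
    annual_likelihood: float      # estimated chance of failure within a year (0 to 1)
    recovery_hours: float         # estimated hours to recover if it fails
    weekly_carrying_hours: float  # hours spent each week keeping it from failing

    def annual_exposure_hours(self) -> float:
        # Assumed score: expected recovery time plus a year of carrying cost.
        expected_recovery = self.annual_likelihood * self.recovery_hours
        return expected_recovery + self.weekly_carrying_hours * 52


def shortlist(items, limit=3):
    """Return the `limit` items with the highest assumed exposure score."""
    ranked = sorted(items, key=lambda item: item.annual_exposure_hours(), reverse=True)
    return ranked[:limit]


# Hypothetical inventory, for illustration only.
inventory = [
    ExposureItem("undocumented integration", 0.5, 48, 2),
    ExposureItem("unpatched critical system", 0.3, 120, 1),
    ExposureItem("aging file server", 0.1, 8, 0),
    ExposureItem("manual month-end export", 0.2, 4, 3),
]

top = shortlist(inventory)
for item in top:
    print(f"{item.name}: likelihood {item.annual_likelihood:.0%}, "
          f"recovery {item.recovery_hours:g} h, "
          f"carrying {item.weekly_carrying_hours:g} h/week")
```

The ranking is only as good as the estimates behind it. Its purpose is to force a short list that leadership can make a decision about, not to produce a precise score.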

After the No

A deferred decision is the most common outcome of a well-executed debt conversation, particularly the first time an organization engages with the issue seriously. Understanding this does not resolve the frustration of it, but it does clarify what a professional response looks like.

The risk that was raised does not disappear when the budget decision ends. If anything, a documented conversation where the exposure was communicated and action was deferred has a different character than a risk nobody knew about. It clarifies accountability. When something does eventually fail (and deferred debt tends to surface on its own timeline regardless of organizational preference), the record of what was raised, what was communicated, and what decision was made matters. This is not about building a grievance file. It is about maintaining clarity on where the accountability actually sits.

Where mitigation is available within existing constraints, pursuing it is both practical and professionally correct. Full remediation being off the table does not mean partial remediation is off the table. Better documentation. A second person with operational knowledge of a critical system. A tested recovery procedure for something that has never been tested. None of this resolves the underlying structural problem, but it changes what a bad day looks like when the debt eventually surfaces, and it demonstrates the kind of judgment that distinguishes practitioners who manage risk from practitioners who just report it.

The posture that corrodes both the practitioner and the organization is the one that emerges from repeated deferral without acknowledgment: the decision to stop raising issues, to let the system carry its own weight until it cannot, to be right about the failure rather than useful before it. That posture is understandable as a response to accumulated frustration. It is also a form of judgment erosion. It trades the capacity to shape outcomes for the satisfaction of having predicted them.

What This Is Really About

Technical debt is a structural signal. What it signals is not primarily about technology. It is about how an organization handles the gap between short-term execution and long-term accountability: whether decisions get closed or just made, whether risk gets distributed or concentrated, whether the people closest to the system are given the authority and the structure to manage it well over time.

The practitioners who navigate this well are not the ones who eliminate all accumulated compromise from their environments. That is not a realistic outcome in any complex operational setting. They are the ones who see the debt clearly, communicate it accurately, manage it responsibly within their authority, and maintain the professional posture to keep making the case even when the immediate answer is no. That is what operational judgment looks like when it is functioning as a durable asset rather than a consumable one.