1. Problem Statement

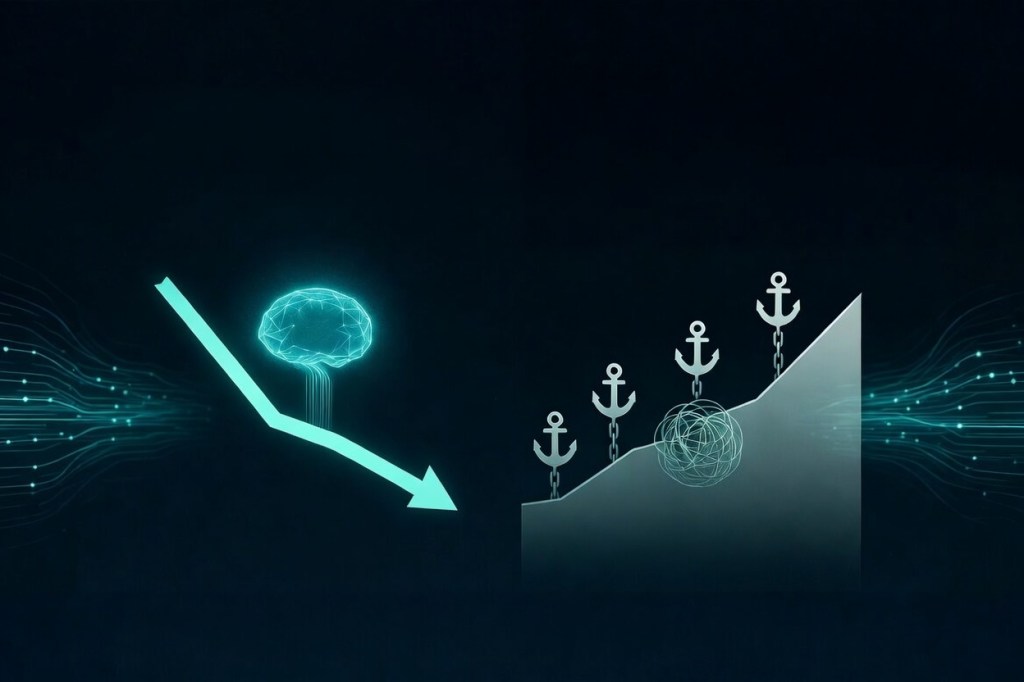

As AI systems evolve toward distributed, multi-agent, probabilistic architectures, traditional identity-based authorization becomes structurally insufficient.

Current models assume discrete human actors, session-based interactions, and centrally issued durable authority. Emerging systems violate all three assumptions.

The resulting gap is structural: identity answers “who are you?” but cannot answer “what are you trying to do right now, and does the context support it?”

2. Proposed Direction

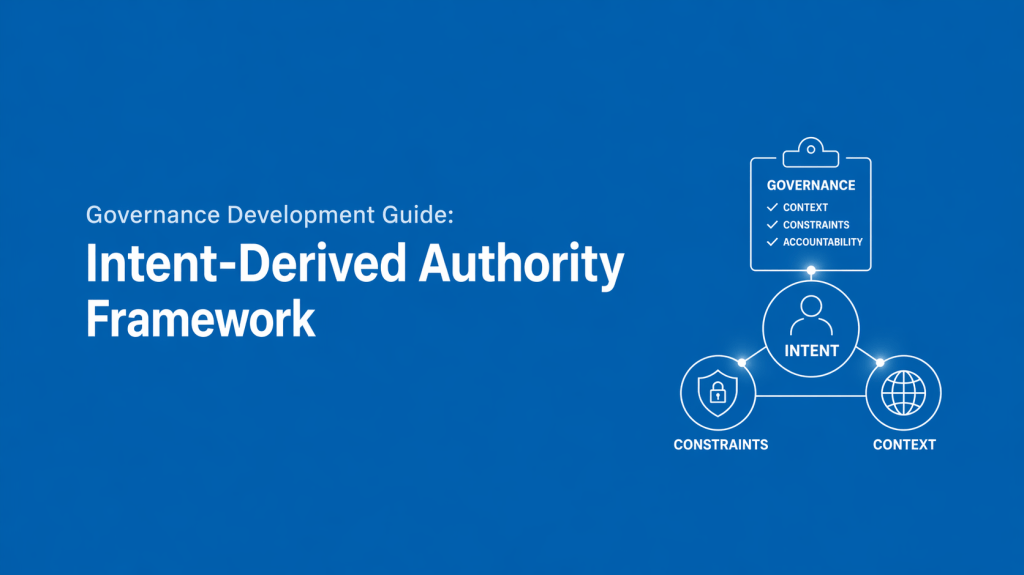

Shift authority from static grants to execution-time derivation:

Authority = f(Actor, Inferred Intent, Context, Constraints)

Key properties:

- Authority is assembled, not issued

- Authority is ephemeral (exists only at the moment of evaluation)

- Evaluation is continuous and probabilistic at the point of execution

- Enforcement is inline at execution
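
To make the formula above concrete, here is a minimal Python sketch of execution-time derivation. Everything in it is an illustrative assumption rather than part of the proposal: the `Intent` and `Decision` types, the `derive_authority` function, the `min_confidence` threshold, and the two sample constraints are hypothetical names. The point is only that nothing is granted in advance; a decision is assembled from the four inputs at the moment of the call and is not stored.

```python
import time
from dataclasses import dataclass
from typing import Callable, Mapping, Sequence


@dataclass(frozen=True)
class Intent:
    action: str        # what the actor appears to be trying to do
    confidence: float  # probability assigned by the inference step, 0..1


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str
    evaluated_at: float  # timestamp only; the decision is never cached


# A constraint is any predicate over (actor, intent, context). Hypothetical shape.
Constraint = Callable[[str, Intent, Mapping[str, object]], bool]


def derive_authority(
    actor: str,
    intent: Intent,
    context: Mapping[str, object],
    constraints: Sequence[Constraint],
    min_confidence: float = 0.8,  # assumed threshold, not from the brief
) -> Decision:
    """Authority = f(Actor, Inferred Intent, Context, Constraints)."""
    now = time.time()
    if intent.confidence < min_confidence:
        return Decision(False, "intent confidence below threshold", now)
    for check in constraints:
        if not check(actor, intent, context):
            return Decision(False, f"constraint failed: {check.__name__}", now)
    return Decision(True, "all constraints satisfied", now)


# Two sample constraints, purely for illustration.
def within_business_hours(actor, intent, context):
    return 9 <= context["hour"] < 17


def read_only(actor, intent, context):
    return intent.action.startswith("read:")


decision = derive_authority(
    "agent-7",
    Intent("read:ledger", 0.93),
    {"hour": 10},
    [within_business_hours, read_only],
)
```

Because `Decision` is returned rather than persisted, a caller that wants to act again must call `derive_authority` again with fresh inputs, which is one simple way to read "ephemeral" and "inline at execution".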

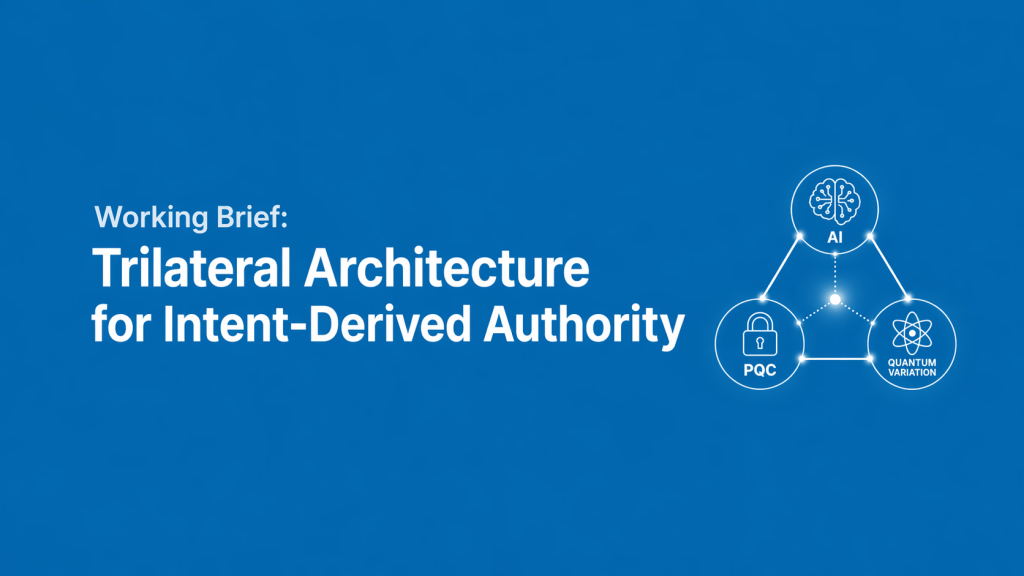

3. Architectural Hypothesis: Trilateral Model

The model rests on three structurally interdependent layers, each performing a role the others cannot:

3.1 Quantum Computing – Variation Engine

An inference process aligned with BVSR (blind variation and selective retention) must explore a large, genuinely “blind” space of possible intent interpretations in real time. Quantum parallelism is hypothesized as the only currently known mechanism capable of maintaining sufficient variation scale and unpredictability within authorization-time constraints. Without it, the variation space collapses into predictable patterns that adversaries can map.

3.2 Artificial Intelligence – Selective Retention Mechanism

AI evaluates candidate interpretations against observed behavior, contextual signals, and governance constraints, then selects the highest-fitness interpretation. This replaces rules-based authorization with probabilistic, context-aware selection.
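
The selection step can be sketched classically. The Python below is a toy illustration under stated assumptions: the `Candidate` type, the `fitness` function, its 0.6/0.4 weights, and the `forbidden` set standing in for governance constraints are all hypothetical, and the candidate list is supplied by hand rather than produced by any variation engine. It only shows the shape of "score every interpretation, then retain the fittest".

```python
from dataclasses import dataclass
from typing import Iterable, Mapping, Sequence, Set, Tuple


@dataclass(frozen=True)
class Candidate:
    action: str    # interpreted action, e.g. "read"
    resource: str  # interpreted target, e.g. "ledger"


def fitness(
    c: Candidate,
    observed_actions: Sequence[str],
    context: Mapping[str, Iterable[str]],
    forbidden: Set[Tuple[str, str]],
) -> float:
    """Score one interpretation; the weights are arbitrary illustration values."""
    # Governance constraint: a forbidden interpretation can never be selected.
    if (c.action, c.resource) in forbidden:
        return 0.0
    # Behavioral signal: share of recently observed actions matching this one.
    behavior = (
        observed_actions.count(c.action) / len(observed_actions)
        if observed_actions
        else 0.0
    )
    # Contextual signal: is the resource in scope for the current task?
    in_scope = 1.0 if c.resource in context.get("resources_in_scope", ()) else 0.0
    return 0.6 * behavior + 0.4 * in_scope


def select_intent(candidates, observed_actions, context, forbidden):
    """Return the highest-fitness candidate together with its score."""
    if not candidates:
        raise ValueError("no candidate interpretations to select from")
    scored = [(fitness(c, observed_actions, context, forbidden), c) for c in candidates]
    best_score, best = max(scored, key=lambda pair: pair[0])
    return best, best_score


candidates = [
    Candidate("read", "ledger"),
    Candidate("write", "ledger"),
    Candidate("read", "payroll"),
]
best, score = select_intent(
    candidates,
    observed_actions=["read", "read", "write"],
    context={"resources_in_scope": {"ledger"}},
    forbidden={("write", "ledger")},
)
```

In a real system the fitness function would presumably be a learned model rather than a fixed weighted sum; the fixed weights here just keep the example inspectable.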

3.3 Post-Quantum Cryptography – Integrity Layer

PQC cryptographically assures the authenticity of inputs, the integrity of the inference pipeline, and non-replayability of decisions. It prevents the inference engine itself from becoming the new attack surface.
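
As a rough illustration of the authenticity and non-replayability properties, the Python sketch below seals each decision with a tag and a one-time nonce. Two assumptions should be read loudly: HMAC-SHA256 from the standard library is used purely as a placeholder, since it is a symmetric MAC and not a post-quantum signature scheme, and the `DecisionSealer` class with its in-memory nonce set is a hypothetical construction, not something the brief specifies.

```python
import hashlib
import hmac
import json
import secrets


class DecisionSealer:
    """Seals decisions and accepts each sealed decision at most once.

    PLACEHOLDER: HMAC-SHA256 stands in for a post-quantum signature.
    """

    def __init__(self, key: bytes) -> None:
        self._key = key
        self._seen_nonces = set()  # in-memory only; a real system needs durable state

    def _tag(self, payload: bytes) -> str:
        return hmac.new(self._key, payload, hashlib.sha256).hexdigest()

    def seal(self, decision: dict) -> dict:
        # A fresh random nonce makes every sealed decision unique.
        nonce = secrets.token_hex(16)
        payload = json.dumps(
            {"decision": decision, "nonce": nonce}, sort_keys=True
        ).encode()
        return {"payload": payload.decode(), "tag": self._tag(payload)}

    def verify_once(self, sealed: dict) -> bool:
        payload = sealed["payload"].encode()
        # Integrity and authenticity: constant-time comparison of the tags.
        if not hmac.compare_digest(self._tag(payload), sealed["tag"]):
            return False
        # Non-replayability: reject any nonce that was already consumed.
        nonce = json.loads(payload)["nonce"]
        if nonce in self._seen_nonces:
            return False
        self._seen_nonces.add(nonce)
        return True


sealer = DecisionSealer(secrets.token_bytes(32))
sealed = sealer.seal({"actor": "agent-7", "action": "read:ledger", "allowed": True})
first_use = sealer.verify_once(sealed)   # accepted
second_use = sealer.verify_once(sealed)  # rejected as a replay
```

This covers only the decision record. Assuring the authenticity of inputs and the integrity of the inference pipeline itself, which the layer also claims, is outside what this sketch demonstrates.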

4. Structural Dependency

Remove any one layer and the system fails:

- No Quantum → variation becomes constrained and predictable

- No AI → no contextual selection mechanism

- No PQC → inference pipeline is corruptible

This is not a stack of enhancements. It is a dependency chain.

5. Core Open Question (Primary Focus for Collaboration)

Inference Integrity: If authority is derived from inferred intent rather than verified identity, how do we ensure the inference process itself remains trustworthy under adversarial conditions?

This replaces the classical problem of identity theft with the harder problem of intent-inference manipulation. If this cannot be solved, the model is not viable regardless of variation or selection capability.

6. Objective of Collaboration

This brief is not a request for full system design. It is an invitation to pressure-test the trilateral hypothesis at the PQC-AI boundary.

Specific questions:

- Is the three-layer dependency structurally valid?

- Where does the inference-integrity model break down?

- What existing work (if any) already approaches this intersection?

- Which assumptions are incomplete or incorrect?

7. Framing

This is not a replacement for identity systems and not a commercial product proposal.

It is a candidate framework for deriving and governing authority at execution time in systems operating under probabilistic, context-driven conditions. In other words, it is the governance layer required to make QC-AI systems operationally viable.

For more information, contact me via LinkedIn.

Posted: 04/17/2026 – 03:54:00pm CDT